ProCave, short for Procedural Cave, is a solo custom engine project that I’ve been working on in the background over the last year while studying at BUas.

I am trying to make a game where you walk around in an environment that constantly changes around you, with entrances and exits opening up while you stumble along trying to find a way through.

Realtime mesh generation

To generate the environment I opted to use marching cubes, after taking some inspiration from an NVIDIA article that describes the process of generating meshes from noise.

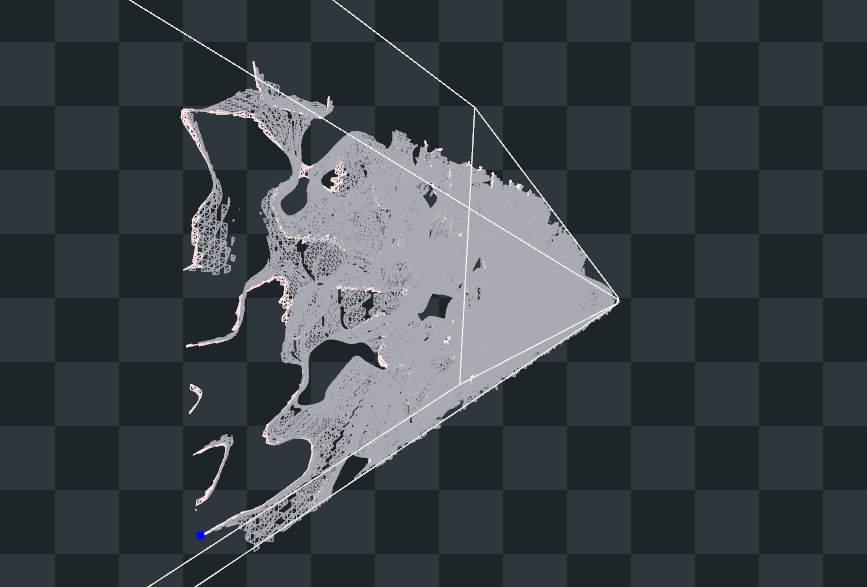

To get a grasp on the theory, I started by implementing simple marching cubes that generates a block of terrain on the CPU, as seen spinning on the right.
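
The core of a marching cubes cell can be sketched as below. This is a minimal illustration, not the project's code: the names `cubeCaseIndex` and `interpolateEdge` are made up for this example, and it assumes that a corner counts as "inside" when its density is below the iso level. The full algorithm also needs the standard 256-entry triangle table, which is left out here.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>

struct Vec3 { float x, y, z; };

// Build the 8-bit case index for one cell: bit i is set when corner i is
// inside the surface (assumed convention: density below the iso level).
std::uint8_t cubeCaseIndex(const std::array<float, 8>& cornerDensity, float isoLevel) {
    std::uint8_t caseIndex = 0;
    for (std::size_t corner = 0; corner < 8; ++corner) {
        if (cornerDensity[corner] < isoLevel) {
            caseIndex = static_cast<std::uint8_t>(caseIndex | (1u << corner));
        }
    }
    return caseIndex;
}

// Place a vertex on the edge between corners a and b, at the point where the
// linearly interpolated density equals the iso level.
Vec3 interpolateEdge(Vec3 a, Vec3 b, float densityA, float densityB, float isoLevel) {
    assert(densityA != densityB); // only meaningful on edges the surface crosses
    const float t = (isoLevel - densityA) / (densityB - densityA);
    return { a.x + t * (b.x - a.x),
             a.y + t * (b.y - a.y),
             a.z + t * (b.z - a.z) };
}
```

The case index selects which edges of the cell carry vertices, and `interpolateEdge` decides where on each of those edges the vertex sits.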

Then I set out to move all of this onto the GPU, creating a geometry shader in HLSL to handle the rendering on the GPU side. The implementation generates cubes in a square around the player, sampling 3D Simplex noise.

One of the first pieces of noise generated with marching cubes on the CPU, and then the first correct mesh spit out by the GPU.
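
Generating cells around the player might look roughly like the sketch below. It is written in C++ for readability, although the same arithmetic could live in a shader. The function name, the cubic region, and the snapping to the cell grid are all assumptions made for this example, not details taken from the project.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Vec3 { float x, y, z; };

// Enumerate the minimum corners of the cells in a cubic block centred on the
// cell that contains the player (hypothetical helper).
std::vector<Vec3> cellOriginsAroundPlayer(Vec3 player, float cellSize, int radiusInCells) {
    assert(cellSize > 0.0f && radiusInCells >= 0);

    // Snap to the grid so sample positions stay fixed while the player moves
    // around inside a single cell.
    const float baseX = std::floor(player.x / cellSize) * cellSize;
    const float baseY = std::floor(player.y / cellSize) * cellSize;
    const float baseZ = std::floor(player.z / cellSize) * cellSize;

    std::vector<Vec3> origins;
    for (int z = -radiusInCells; z <= radiusInCells; ++z) {
        for (int y = -radiusInCells; y <= radiusInCells; ++y) {
            for (int x = -radiusInCells; x <= radiusInCells; ++x) {
                origins.push_back({ baseX + static_cast<float>(x) * cellSize,
                                    baseY + static_cast<float>(y) * cellSize,
                                    baseZ + static_cast<float>(z) * cellSize });
            }
        }
    }
    return origins;
}
```

Snapping is a design choice in this sketch: without it, the noise would be sampled at slightly different positions every frame, which tends to make the surface shimmer as the player moves.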

4D Noise Syncing

In order to eventually line up all gameplay elements with the visuals, I needed some way to keep the environment info in sync across the CPU and GPU.

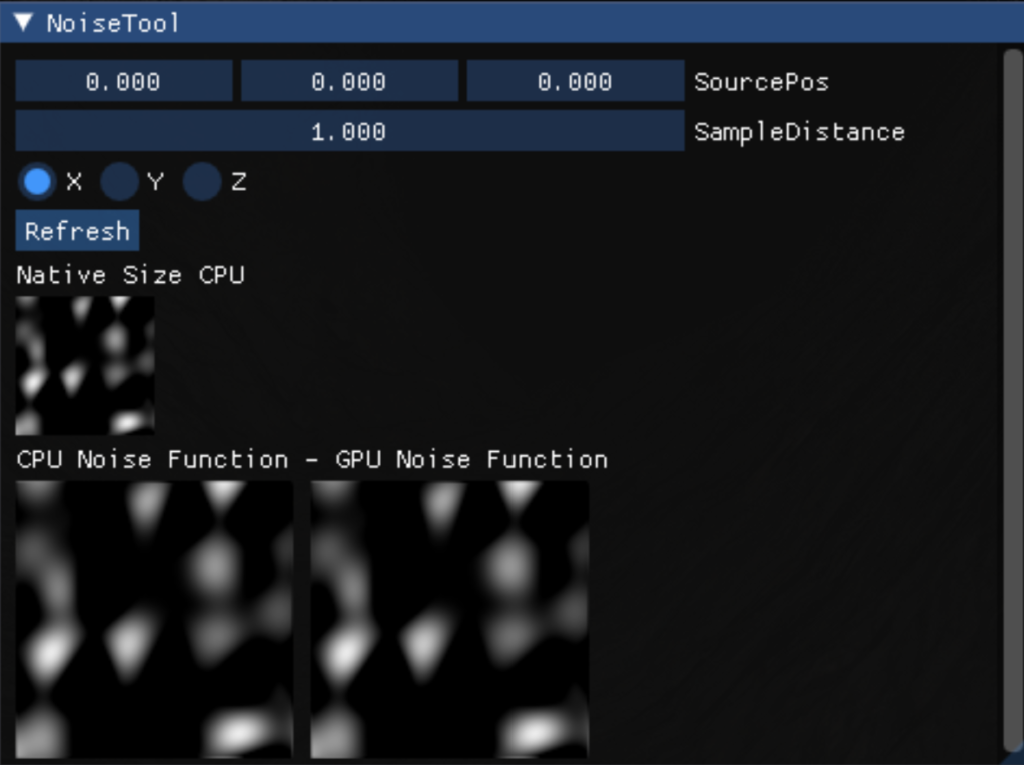

After porting a Simplex noise code snippet from the GPU to the CPU, I needed to confirm that everything was working, so I created a quick tool using ImGui. By taking a slice of the noise generated on the CPU, rendering it to a texture, and doing the same on the GPU side, I could compare the noise generated on both sides for irregularities.
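
A numeric check can complement the visual comparison. The sketch below assumes the GPU slice has already been read back into CPU memory as a flat array of floats with the same layout as the CPU slice. `compareNoiseSlices` and `SliceDiff` are hypothetical names, not part of the tool described above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct SliceDiff {
    float maxAbsError;       // largest per-sample difference found
    std::size_t mismatches;  // number of samples that differ by more than the tolerance
};

// Compare two noise slices sample by sample (hypothetical helper).
SliceDiff compareNoiseSlices(const std::vector<float>& cpuSlice,
                             const std::vector<float>& gpuSlice,
                             float tolerance) {
    assert(cpuSlice.size() == gpuSlice.size());
    SliceDiff result{0.0f, 0};
    for (std::size_t i = 0; i < cpuSlice.size(); ++i) {
        const float error = std::fabs(cpuSlice[i] - gpuSlice[i]);
        result.maxAbsError = std::max(result.maxAbsError, error);
        if (error > tolerance) {
            ++result.mismatches;
        }
    }
    return result;
}
```

A small non-zero tolerance is usually needed, since CPU and GPU floating-point math are not guaranteed to agree bit for bit.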

Collision

Now that the noise is synced across both processors, I can start using it on the CPU side to generate environment collision, plugging the marching cubes mesh generation code from before into ReactPhysics3D to generate collision meshes and simulate objects.

Making the cave move like this is as simple as replacing the 3D noise function with a 4D implementation.
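
Physics engines generally take a triangle mesh as a flat vertex array plus an index array. The sketch below converts marching cubes output (a list of loose triangles) into that layout. It deliberately calls no ReactPhysics3D API, and `buildIndexedMesh` and the struct names are assumptions for illustration only.

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <map>
#include <vector>

using Vertex = std::array<float, 3>;
struct Triangle { Vertex a, b, c; };

struct IndexedMesh {
    std::vector<float> vertices;        // x, y, z triples
    std::vector<std::uint32_t> indices; // three indices per triangle
};

// Merge identical vertices and emit an indexed triangle list (hypothetical helper).
IndexedMesh buildIndexedMesh(const std::vector<Triangle>& triangles) {
    IndexedMesh mesh;
    std::map<Vertex, std::uint32_t> seen; // exact-match lookup of vertices already stored

    auto addVertex = [&](const Vertex& v) {
        auto it = seen.find(v);
        if (it == seen.end()) {
            const auto index = static_cast<std::uint32_t>(mesh.vertices.size() / 3);
            mesh.vertices.insert(mesh.vertices.end(), v.begin(), v.end());
            it = seen.emplace(v, index).first;
        }
        mesh.indices.push_back(it->second);
    };

    for (const Triangle& t : triangles) {
        addVertex(t.a);
        addVertex(t.b);
        addVertex(t.c);
    }
    assert(mesh.indices.size() == triangles.size() * 3);
    return mesh;
}
```

The resulting arrays would then be handed to the physics library's triangle mesh shape; how exactly depends on the library version in use.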
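
As a rough sketch of that swap: the density function gains a fourth input, and this example assumes that scaled time is what gets passed into it (the post does not spell that out). `placeholderNoise4D` is a stand-in so the example compiles on its own. It is NOT simplex noise, and `caveDensity` and `driftSpeed` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>

// Stand-in for a real 4D simplex noise function, returning values in [-1, 1].
// Any smooth 4D function works for illustrating the idea.
float placeholderNoise4D(float x, float y, float z, float w) {
    return std::sin(x + 1.3f * w) * std::cos(y - 0.7f * w) * std::sin(z + 0.5f * w);
}

// Sample the cave at a world position and a point in time. Holding time fixed
// gives an ordinary 3D field; advancing it morphs the field smoothly.
float caveDensity(float x, float y, float z, float timeSeconds, float driftSpeed) {
    const float w = timeSeconds * driftSpeed;
    assert(std::isfinite(w)); // guards against an uninitialised clock value
    return placeholderNoise4D(x, y, z, w);
}
```

Because both the CPU and GPU versions would receive the same time value, the collision and the visuals can stay in step as the cave moves.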

Triplanar Mapping

Next up was a bunch of visual improvements, since I was getting quite done with staring at a beige blob. It was time to apply some global space triplanar mapping. It is simple enough to apply, and there is no need for UV mapping either, because the texture coordinates can be taken straight from the global space position.

Then I use this to set up textures, normal mapping, some lighting, and depth fog. To generate the normal map, the noise is sampled a few extra times to find which direction the noise ‘gradient’ is pointing. That will be our normal direction.
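
The usual form of triplanar mapping can be sketched as follows, written in C++ for readability rather than as shader code. The names, the `sharpness` exponent, and the `tiling` factor are assumptions for this example. The final colour would be the weighted sum of three texture samples, one per projection plane.

```cpp
#include <cassert>
#include <cmath>

struct Vec2 { float u, v; };
struct Vec3 { float x, y, z; };

// Blend weights from the surface normal: each axis contributes according to how
// closely the surface faces it. The weights sum to 1.
Vec3 triplanarWeights(Vec3 normal, float sharpness) {
    assert(sharpness > 0.0f);
    const float wx = std::pow(std::fabs(normal.x), sharpness);
    const float wy = std::pow(std::fabs(normal.y), sharpness);
    const float wz = std::pow(std::fabs(normal.z), sharpness);
    const float sum = wx + wy + wz;
    assert(sum > 0.0f); // the normal must not be the zero vector
    return { wx / sum, wy / sum, wz / sum };
}

struct TriplanarUVs { Vec2 xPlane, yPlane, zPlane; };

// Texture coordinates come straight from the world position, one pair per axis.
TriplanarUVs triplanarUVs(Vec3 worldPos, float tiling) {
    return { { worldPos.z * tiling, worldPos.y * tiling },   // projected along X
             { worldPos.x * tiling, worldPos.z * tiling },   // projected along Y
             { worldPos.x * tiling, worldPos.y * tiling } }; // projected along Z
}
```

A higher `sharpness` narrows the blend region between projections, which trades visible stretching for more visible seams.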
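
One common way to find that gradient is central differences, which costs six extra samples per point. The sketch below takes the density function as a parameter so it stands on its own. `gradientNormal` and the `epsilon` step are assumptions for this example, and whether the result points out of or into the rock depends on the sign convention of the density, so it may need to be negated.

```cpp
#include <cassert>
#include <cmath>
#include <functional>

struct Vec3 { float x, y, z; };
using DensityFn = std::function<float(float, float, float)>;

// Estimate the direction of steepest density increase at point p and
// normalise it (hypothetical helper).
Vec3 gradientNormal(const DensityFn& density, Vec3 p, float epsilon) {
    assert(epsilon > 0.0f);
    const float gx = density(p.x + epsilon, p.y, p.z) - density(p.x - epsilon, p.y, p.z);
    const float gy = density(p.x, p.y + epsilon, p.z) - density(p.x, p.y - epsilon, p.z);
    const float gz = density(p.x, p.y, p.z + epsilon) - density(p.x, p.y, p.z - epsilon);

    const float length = std::sqrt(gx * gx + gy * gy + gz * gz);
    if (length == 0.0f) {
        return { 0.0f, 1.0f, 0.0f }; // flat region: fall back to an arbitrary up vector
    }
    return { gx / length, gy / length, gz / length };
}
```

For a density that grows with distance from the origin, such as a sphere, the estimated normal at a surface point lies along the line from the centre through that point.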

Optimizations

At this point, all of this was taking its toll on performance, so in order to keep running at 60 fps I implemented frustum culling. Now the noise is sampled only about 16.66% as often as before, roughly one sixth of the original sample count.
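
A typical frustum test for a block of cells checks its bounding box against the six frustum planes. The sketch below assumes each plane stores an inward-facing normal, so a point is inside when `dot(normal, p) + d >= 0`. The struct and function names are illustrative, and the granularity at which the project culls is not stated in the post.

```cpp
#include <array>
#include <cassert>

struct Vec3 { float x, y, z; };
struct Plane { Vec3 normal; float d; };       // inside when dot(normal, p) + d >= 0
struct Aabb { Vec3 minCorner, maxCorner; };

// Returns false only when the box lies fully behind at least one plane.
bool isBoxVisible(const std::array<Plane, 6>& frustum, const Aabb& box) {
    assert(box.minCorner.x <= box.maxCorner.x &&
           box.minCorner.y <= box.maxCorner.y &&
           box.minCorner.z <= box.maxCorner.z);

    for (const Plane& plane : frustum) {
        // Pick the box corner that lies furthest along the plane normal.
        const Vec3 farthest{
            plane.normal.x >= 0.0f ? box.maxCorner.x : box.minCorner.x,
            plane.normal.y >= 0.0f ? box.maxCorner.y : box.minCorner.y,
            plane.normal.z >= 0.0f ? box.maxCorner.z : box.minCorner.z };

        const float distance = plane.normal.x * farthest.x +
                               plane.normal.y * farthest.y +
                               plane.normal.z * farthest.z + plane.d;
        if (distance < 0.0f) {
            return false; // even the best corner is behind this plane
        }
    }
    return true;
}
```

This test is conservative: a box near a frustum corner can pass even though it is not actually visible, but nothing visible is ever rejected, so skipping the noise sampling for culled boxes is safe.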